To reference this article

What Is Operant Behavior And How To Study It, Maze Engineers (2022). doi.org/10.55157/ME2022127

Operant behavior describes voluntary, goal-directed actions that animals perform based on the consequences of previous occurrences. It develops when animals learn to respond in a specific way to recurring situations based on the outcomes of their past experience.

American psychologist B.F. Skinner was the first to use the term operant to describe the behaviors he observed in his landmark experiments with laboratory animals. Operant behavior and conditioning clarify the distinction between conscious and unconscious behavioral responses. They have influenced psychology and applied behavior analysis, and they have improved our understanding of addiction, substance dependence, child development, and decision-making.

What is Operant Behavior?

Animals, including humans, can develop new sets of actions in response to events or stimuli. Behaviors can develop from learning processes when the animals associate two or more incidents after they have repeatedly taken place concurrently.[1]

Operant behavior is a type of learned behavior where animals associate a specific event or stimulus with its consequence and willingly change their behavior. The association occurs after the animals have repeatedly faced the same outcome of the action, which is reflected in the change in the animals’ tendency to perform a certain action.

In other words, operant behavior emerges as an adaptive strategy after the consequence of a particular action is realized. Animals learn to change their behavior so that they can achieve favorable results or avoid undesirable repercussions that they learned from past experiences.

For example, trainers can teach an animal to sit by showing it treats before giving it a physical cue (a ‘sit’ gesture) or a verbal cue (a ‘sit’ command). As soon as the animal sits, the trainer gives it the treats. When the exercise is repeated several times, the animal learns to sit when it sees the gesture or hears the command because it associates the cue with a desirable consequence.[1-2]

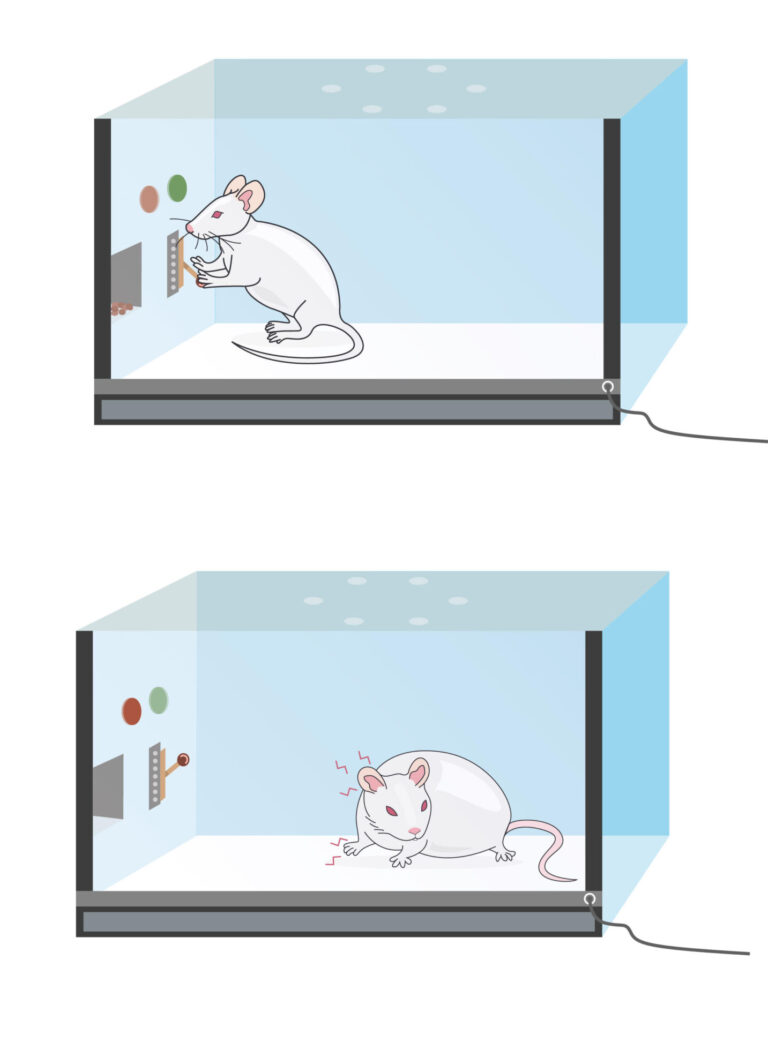

A rodent learns that pressing a lever leads to a shock after repeatedly performing the same action.


The History of Operant Behavior Studies

The word operant was coined by American psychologist and behaviorist B. F. Skinner in the late 1930s. The idea was built upon the law of effect, which describes how some behaviors are learned and displayed in animals.

Thorndike’s law of effect illustrates how animals learn from their actions

The law of effect was proposed by American psychologist Edward Thorndike in 1898. It was based on his puzzle box experiment, which demonstrated how animals learn new tasks.

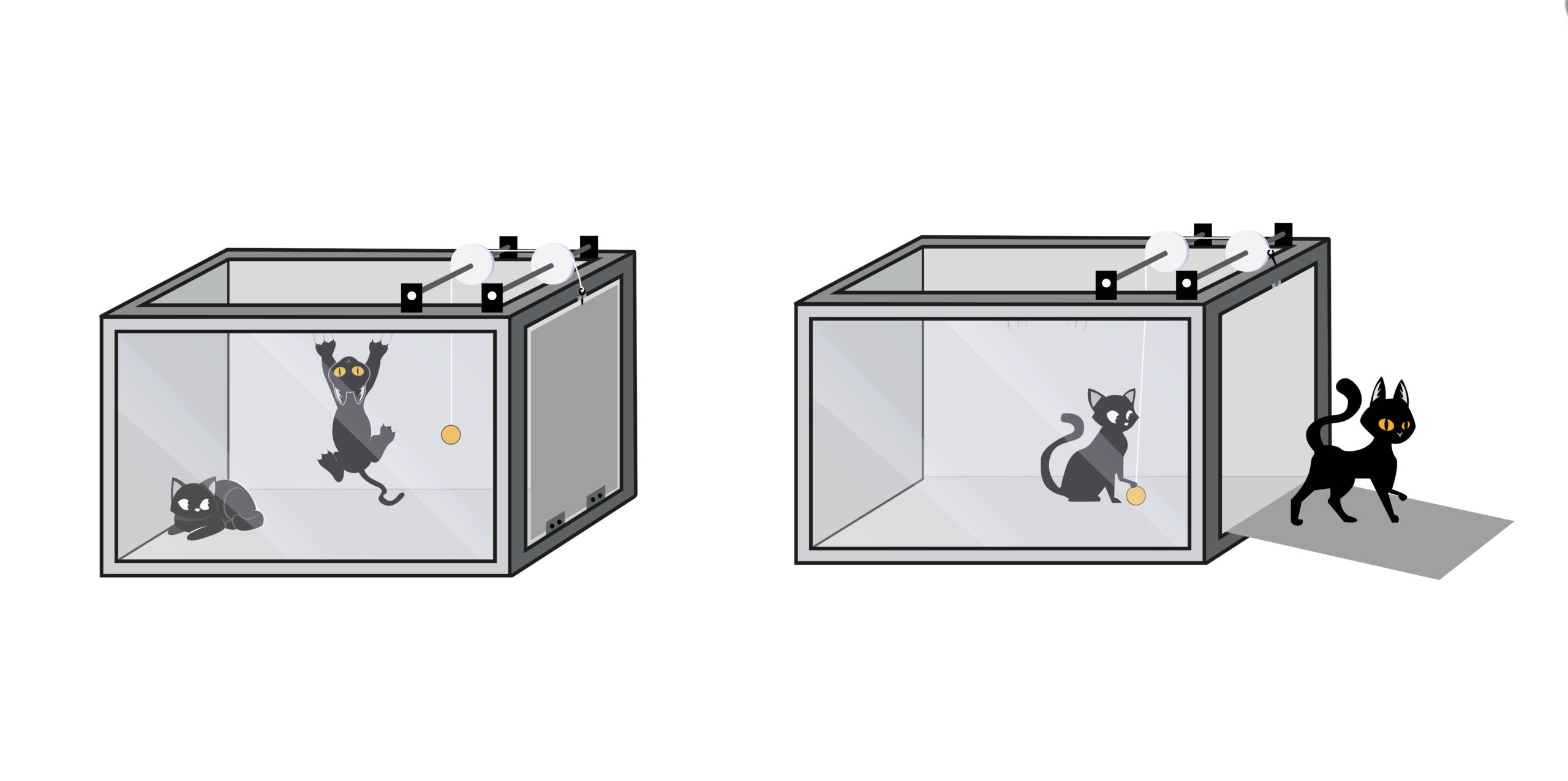

In Thorndike’s puzzle box experiment, cats were individually placed inside a puzzle box from which they could escape by performing a task such as pushing a lever or pulling a string. Once inside, the cat was motivated to escape by food placed outside the box.

When the cat was first trapped in the box, it was disconcerted and performed the escape task only by accident. After several trials, the cat became less erratic, and the time it took to perform the escape task became shorter. This led Thorndike to propose that animals are likely to repeat behavior that resulted in rewarding consequences but less likely to repeat behavior that resulted in undesirable repercussions.[1,3]

In Thorndike’s experiments, cats learned to push levers to escape the puzzle box.

Skinner’s landmark experiments demonstrate how behavior can be trained

The idea that consequence can influence behavioral responses was demonstrated in Skinner’s landmark experiments with animals in the operant chamber, also called the Skinner box.

Skinner placed animals such as rats and pigeons individually in the chamber, where they could interact with a lever (or a pecking disk for pigeons). The interaction could result in food being dispensed inside the chamber or in the removal of noise or light.

In his experiments, Skinner first shaped the caged animal to push the lever by dispensing food every time the animal moved close to the lever. Once the animal had learned the target behavior (pushing the lever), he recorded how often the animal pushed the lever under different consequences. He found that the animal was more inclined to push the lever when the action resulted in rewarding consequences, such as food in the dispenser or the removal of noise from the chamber, than when it led to undesirable repercussions such as disturbing noise.[1,2]

Operant Behavior and Operant Conditioning

The behavior observed in Thorndike’s and Skinner’s experiments was in stark contrast to the respondent behavior reflected in Pavlov’s experiments with dogs.

Specifically, animals in Thorndike’s and Skinner’s experiments performed their behavioral responses consciously. They learned from past consequences in similar situations and knowingly behaved to satisfy their needs. In other words, the animals could control their actions, unlike the dogs in Pavlov’s classical conditioning experiments.[1,3]

Operant conditioning is achieved by reinforcement or punishment

Operant behavior can be shaped and modulated by operant conditioning, which follows a specific behavior with a consequence. A consequence that encourages the target behavior is termed reinforcement, whereas one that discourages it is called punishment.

Reinforcement can be positive when favorable stimuli such as food are delivered to the animals soon after they perform the desired behavior. It can be negative when aversive stimuli like loud noise are removed after the animals exhibit the desired behavior.

Along the same lines, punishment can be positive when aversive stimuli are given to the animals after they perform unwanted behavior. It can be negative when desirable stimuli are taken away soon after the animals exhibit the unwanted behavior.
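The four combinations described above can be summarized in a short lookup. The following is a minimal Python sketch, not part of any published protocol; the names `Consequence` and `classify_consequence` are hypothetical and chosen only for illustration.

```python
from enum import Enum


class Consequence(Enum):
    POSITIVE_REINFORCEMENT = "positive reinforcement"
    NEGATIVE_REINFORCEMENT = "negative reinforcement"
    POSITIVE_PUNISHMENT = "positive punishment"
    NEGATIVE_PUNISHMENT = "negative punishment"


def classify_consequence(stimulus_is_aversive: bool, stimulus_is_added: bool) -> Consequence:
    """Classify a consequence by what kind of stimulus it involves and
    whether that stimulus is delivered (added) or taken away (removed).

    "Positive" means a stimulus is added; "negative" means one is removed.
    Reinforcement encourages the behavior; punishment discourages it.
    """
    if stimulus_is_added:
        # Adding something aversive discourages; adding something favorable encourages.
        if stimulus_is_aversive:
            return Consequence.POSITIVE_PUNISHMENT
        return Consequence.POSITIVE_REINFORCEMENT
    # Removing something aversive encourages; removing something favorable discourages.
    if stimulus_is_aversive:
        return Consequence.NEGATIVE_REINFORCEMENT
    return Consequence.NEGATIVE_PUNISHMENT


# Food delivered after a lever press: a favorable stimulus is added.
food_after_press = classify_consequence(stimulus_is_aversive=False, stimulus_is_added=True)
# Loud noise switched off after a lever press: an aversive stimulus is removed.
noise_off_after_press = classify_consequence(stimulus_is_aversive=True, stimulus_is_added=False)
```

Here `food_after_press` is positive reinforcement and `noise_off_after_press` is negative reinforcement, matching the food and loud-noise examples in the text.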

A reinforcement schedule is an operant conditioning tool

Operant behavior is reversible and requires a reinforcement schedule so that it does not undergo extinction. Put differently, once acquired, operant behavior must be reinforced or punished from time to time so that the animals maintain the target behavior. Otherwise, the animals will eventually stop displaying the target behavior even when the same trigger is present.

A reinforcement schedule is a recognizable pattern of how reinforcers and/or punishers are administered, which teaches the association between the behavior and its aftereffect.[1-2]

It can be constructed as:

Time-based schedule

This protocol centers on the time that elapses between the verbal or physical cue and the delivery of reinforcers to the animal. It is the most popular type of reinforcement schedule and emphasizes the temporal control of operant behavior, using a stimulus as a time marker for the associated behavior.[2]

A time-based reinforcement schedule can be designed as:

- Fixed Interval (FI): Also known as inter-reinforcement time schedules, these supply reinforcers and/or punishers at a predictable time after the trigger and target behavior.

Animal training is a classic example of an FI reinforcement schedule. It is thought to be the simplest form but the most susceptible to extinction.[1,4]

- Variable Interval: Also known as trial time-to-reinforcement or peak procedures, where the time between receiving a stimulus and receiving reinforcers or punishers is variable.[1]

Both approaches can be combined in the peak-interval (PI) procedure. Here, animals are first subjected to an FI schedule to build an association between the time marker, the target behavior, and the reward. Afterward, the reward is withheld before a fixed delay between the time marker, the target behavior, and its consequence is introduced. This type of schedule is typically used to study internal timing and reward anticipation.[4]
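The difference between fixed and variable interval schedules can be illustrated with a small simulation. This is a minimal sketch under stated assumptions: it uses the common convention that the first response after the interval has elapsed is reinforced, it restarts the clock at each reinforcer delivery, and it draws variable intervals uniformly between 0.5 and 1.5 times the mean. The class name `IntervalSchedule` and its parameters are hypothetical and do not correspond to any particular chamber software.

```python
import random


class IntervalSchedule:
    """Reinforce the first response made after the current interval has elapsed."""

    def __init__(self, mean_interval_s: float, variable: bool = False, rng=None):
        self.mean_interval_s = mean_interval_s
        self.variable = variable
        self.rng = rng or random.Random()
        # The time marker (cue) is assumed to occur at t = 0 seconds.
        self._start_interval(0.0)

    def _start_interval(self, now_s: float) -> None:
        if self.variable:
            # Assumption: uniform spread around the mean; other distributions are possible.
            interval = self.rng.uniform(0.5 * self.mean_interval_s,
                                        1.5 * self.mean_interval_s)
        else:
            interval = self.mean_interval_s
        self.available_at_s = now_s + interval

    def respond(self, now_s: float) -> bool:
        """Register a response at time now_s; return True if it is reinforced."""
        if now_s >= self.available_at_s:
            self._start_interval(now_s)  # the next interval starts at delivery
            return True
        return False


# FI 10 s: responses before 10 s go unreinforced, the first one at or after 10 s pays off.
fi = IntervalSchedule(mean_interval_s=10.0)
fi_outcomes = [fi.respond(t) for t in (5.0, 10.0, 15.0, 20.5)]
```

With the FI schedule above, `fi_outcomes` is `[False, True, False, True]`: the animal cannot speed up delivery by responding more often, only by responding at the right time.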

Ratio schedule

A ratio schedule uses the number of responses as a marker for the delivery of reinforcers or punishers, which can be arranged as fixed or variable.

In a fixed ratio schedule, the number of times that animals must perform the target behavior before they receive the consequence is clearly defined. For example, a salesperson must reach an expected sales number before a sales bonus is given.

In the variable ratio schedule, the reward is given after the target behavior has been displayed an unspecified number of times. In other words, the animals learn to associate the target behavior with a reward but do not know how often they must perform the task before the reward is realized. This type of reinforcement schedule is regarded as the most productive and the least prone to extinction.[1]

A good illustration of a variable ratio schedule is gambling. Gamblers are aware of the reward associated with the activity although they do not know how many times they have to wager before receiving the reward.[1]
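The contrast between fixed and variable ratio schedules can be sketched in a few lines of Python. This is an illustrative sketch only: the class name `RatioSchedule` is hypothetical, and the variable requirement is assumed to be drawn uniformly from 1 to `2 * mean_ratio - 1` responses so that it averages `mean_ratio`.

```python
import random


class RatioSchedule:
    """Deliver the consequence after a required number of responses."""

    def __init__(self, mean_ratio: int, variable: bool = False, rng=None):
        self.mean_ratio = mean_ratio
        self.variable = variable
        self.rng = rng or random.Random()
        self._reset()

    def _reset(self) -> None:
        if self.variable:
            # Assumption: uniform over 1 .. 2*mean - 1, which averages mean_ratio.
            self.required = self.rng.randint(1, 2 * self.mean_ratio - 1)
        else:
            self.required = self.mean_ratio
        self.count = 0

    def respond(self) -> bool:
        """Register one response; return True if it earns the consequence."""
        self.count += 1
        if self.count >= self.required:
            self._reset()
            return True
        return False


# FR 5: every fifth response is rewarded, so the pattern is fully predictable.
fr = RatioSchedule(mean_ratio=5)
fr_outcomes = [fr.respond() for _ in range(10)]

# VR 5: rewards arrive after an unpredictable number of responses.
vr = RatioSchedule(mean_ratio=5, variable=True, rng=random.Random(1))
vr_rewards = sum(vr.respond() for _ in range(1000))
```

On the fixed schedule, `fr_outcomes` is `True` only at the fifth and tenth responses. On the variable schedule, the responder cannot tell which response will pay off, which mirrors the gambling example.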

How to Study Operant Behavior

Studies into operant behavior can be undertaken using operant chambers. Animals are housed in a chamber where they are subjected to reinforcement, punishment, and other relevant stimuli. The underlying idea is to manipulate the behavior of the captive animal using a testing protocol that embodies a reinforcement schedule specifically designed to address a certain behavior or question at issue.[5]

Features in Operant Chambers

At their most basic, operant chambers typically consist of:

- A plastic box that keeps the animal during the trial and provides reinforcers, punishers, and relevant stimuli.

In general, the plastic box should be large enough to house the animal and allow daily maintenance, such as feces removal, without the need to move the animal from the box. The inside of the box must be sufficiently bright during the day so that a day/night cycle can be established for the animal.

Most if not all chambers contain a trough and a controllable food dispenser on one wall, which may be equipped with a recording head to automatically record the number of times the captive animal approaches the trough. Some chambers may contain lightbulbs, a speaker, or electrical wires in addition to the food trough and dispenser. These components serve as reinforcers or punishers, depending on the testing protocol.

In addition, the plastic box should be made from soundproof material so that the only sounds reaching the captive animal are intentional and do not come from the surroundings outside the chamber.

- Operants are components inside the chamber that the captive animal is shaped and trained to manipulate. The interaction with an operant, such as a nose-poke port, lever, string, or pecking disk, leads to a specific consequence, which is dictated by the testing protocol.

Nowadays, operants are connected with sensors that implement the reinforcement schedule and automatically record the behavior of the captive animal.

- Software controls the operant chambers according to the protocol of choice. It often accompanies the hardware (the operant chamber). In most cases, the software also provides one or more standard protocols that are compatible with the accompanying chamber(s). Some may allow users to configure the reinforcement schedule.

Nowadays, many software packages can control one or multiple sets of operant chambers. This ability allows researchers to run a number of studies in parallel or conduct different protocols simultaneously, reducing the overall time required for the study.
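To make the idea of configurable, multi-chamber protocols more concrete, here is a minimal Python sketch of what a per-chamber protocol description and an event summary might look like. Everything in it is hypothetical: `ProtocolConfig`, its fields, the event tuple layout, and `summarize_events` are invented for illustration and do not reflect the interface of any specific vendor's software.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ProtocolConfig:
    """Hypothetical description of one chamber's testing protocol."""
    chamber_id: str
    operant: str           # e.g. "lever" or "nose_poke"
    schedule: str          # "FR", "VR", "FI", or "VI"
    schedule_value: float  # responses for ratio schedules, seconds for interval schedules
    session_minutes: int


def summarize_events(events):
    """Count logged events per (chamber_id, event_type).

    Each event is assumed to be a (chamber_id, time_s, event_type) tuple.
    """
    return Counter((chamber_id, event_type) for chamber_id, _time_s, event_type in events)


# Two chambers running different protocols in parallel.
configs = [
    ProtocolConfig("chamber_1", "lever", "FR", 5, 30),
    ProtocolConfig("chamber_2", "nose_poke", "VI", 30.0, 30),
]

# A made-up event log of the kind sensors on the operants might produce.
events = [
    ("chamber_1", 1.2, "response"),
    ("chamber_2", 1.9, "response"),
    ("chamber_1", 2.4, "response"),
    ("chamber_1", 2.4, "reinforcer"),
]
summary = summarize_events(events)
```

Keeping the protocol description separate from the event log is one possible design: the same summary code can then be reused regardless of which schedule each chamber runs.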

Examples of Modified Operant Chamber and Protocols

Contemporary standard operant chambers are modeled after Skinner’s original box, with added automated data collection and adjustable conditions, including additional nose pokes and levers that increase the task difficulty. The modifications allow studies into strategy shifting and reversal learning in addition to cognitive and learning skills.

Most modern-day chambers are modified to accommodate protocols developed to investigate specific conditions.

For example,

- The Self-administration Chamber is specifically designed to investigate addiction, substance dependence, and operant conditioning. The chamber has a shock floor and a syringe system in addition to components in standard operant chambers.

- The Step-Down Avoidance Apparatus contains a raised vibrating platform in the chamber center. It is designed for experiments testing aversive memory and contextual learning.

In Conclusion

Operant behavior and conditioning help us understand why and how certain behavior manifests in animals and humans. Specifically, they provide the basis for understanding how past experiences can affect the decision-making process and turn a particular behavior into a habit or inclination. They are also starting points for neurobiological studies that look into how certain activities or chemicals affect the brain and influence cognitive ability. To dive deeper into these topics, check out our articles on positive reinforcement using operant conditioning, negative reinforcement using operant conditioning, positive punishment using operant conditioning, drugs that block operant conditioning, and enhancement of operant conditioning by drugs.

Experimentally, operant behavior and conditioning can be assessed using operant chambers that have been modified for protocols that address specific questions or conditions. If you are looking for a versatile operant chamber for your behavioral adaptation and cognitive neurobiology studies, check out our standard operant chamber packages with customizable nose poke, lever, and exposure conditions!

References

1. Spielman, R.M., Dumper, K., Jenkins, W., et al. “Chapter 6.3 Operant Conditioning” Psychology, OpenStax, 2014, https://openstax.org/books/psychology/pages/6-3-operant-conditioning

2. Staddon, J.E.R. and Cerutti, D.T. “Operant Conditioning” Annual Review of Psychology, 2003: 54(1), pp. 115-144

3. Chance, P. “Thorndike’s Puzzle Boxes and the Origins of the Experimental Analysis of Behavior” Journal of the Experimental Analysis of Behavior, 1999: 72, pp. 433-440

4. Balcı, F. and Freestone, D. “The Peak Interval Procedure in Rodents: A Tool for Studying the Neurobiological Basis of Interval Timing and Its Alterations in Models of Human Disease” Bio-protocol, 2020: 10(17): e3735

5. Rossi, M. and Yin, H.H. “Methods for Studying Habitual Behavior in Mice” Current Protocols in Neuroscience, 2012